Traceable Defense AI M8 Released

Traceable development runs on a six-week release cycle. We call each cycle a milestone, and each milestone rolls up the features and fixes completed during those six weeks. This blog series describes the big and medium rocks included in each milestone release.

The M8 release adds many capabilities that our customers have been asking for. It makes deployment easier, extends agent support to more technologies, expands the API Intelligence information available, and makes it easier to find risks that should be addressed. It also brings several big additions to our protection abilities, an improved user experience around threat hunting, and the ability to isolate your data and views per environment!

Whew! Lots of great additions. Let’s take a closer look...

Easier to install agents, and improved coverage

With more customers deploying Traceable, we need to be more explicit and consistent about naming the individual elements that make up Traceable. On the customer side, clients implement Traceable modules to collect data, choosing between in-app language modules and proxy modules. To manage these modules, redact sensitive data, and ensure reliable communication between the customer infrastructure and the Traceable AI platform, customers install one or more Traceable-agents.

Agent deployment

The Traceable-agent is a new container image which brings many components together into a single image to make deployments easier (we are still working on the non-containerized consolidated installation). The Traceable-agent is the gateway into the Traceable AI SaaS platform from each customer environment. The combined components include the services for pre-processing, session management, and a new “module and blocking management” service.

The responsibilities of this new single packaged Traceable-agent are:

- Pre-processing (such as sensitive data redaction, user attribution before data leaves the customer site, and other processing that happens at the agent level)

- Managing the session and transferring data between Traceable and the customer environments

- Module and blocking management (see the next section for more details)
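To make the pre-processing responsibility more concrete, here is a minimal sketch of what sensitive data redaction before data leaves the customer site could look like. It is not the Traceable-agent's actual implementation: the key names, the card-number pattern, the `MASK` value, and the `redact` function are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical redaction rules; a real deployment would use its own configured rules.
MASK = "***REDACTED***"
SENSITIVE_KEYS = {"password", "ssn", "credit_card", "authorization"}
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(payload):
    """Return a copy of payload with sensitive values masked."""
    if isinstance(payload, dict):
        return {
            key: MASK if key.lower() in SENSITIVE_KEYS else redact(value)
            for key, value in payload.items()
        }
    if isinstance(payload, list):
        return [redact(item) for item in payload]
    if isinstance(payload, str):
        # Mask anything inside free text that looks like a card number.
        return CARD_PATTERN.sub(MASK, payload)
    return payload


captured = {
    "user": "alice",
    "password": "hunter2",
    "notes": ["card 4111 1111 1111 1111 on file"],
}
print(redact(captured))
```

The function returns a new structure instead of mutating the captured request, so the original data stays untouched inside the customer environment and only the masked copy would be forwarded.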

The new Traceable-agent is currently a release candidate (RC) in final production testing and is expected to be fully available by March 15th.

The new Traceable-agent can now also be deployed using Terraform, a popular deployment tool, which makes rollout easier.

Module and Blocking Management

A new capability has been added for module and blocking management. This new capability is a step towards centralized management and monitoring of the customer-side modules from the UI.

Module and blocking management covers the different Traceable instrumentation modules, including:

- In-app modules: Java, Golang

- Proxy modules: NGINX, Envoy, Ambassador

Agent compatibility

The Traceable-agent is now 100% compatible with OpenTelemetry (OTel), which enables interoperability with other distributed tracing and performance monitoring tools. Just as important, the Traceable-agent now adheres to the newer OTel data collection standard, which is more scalable and has better context awareness than the previously used OpenCensus standard.

Module functionality

We have added module coverage for Ambassador (in beta). Ambassador is a popular Envoy-based gateway optimized for distributed applications. Ambassador-based data collection helps us support the newer cloud environments our customers run.

The new module capabilities include:

- Request capture and analysis

- Signature-based & IP range-based blocking

- Rate limiting
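As an illustration of the IP range-based blocking idea in the list above, the following minimal sketch checks a client address against a set of blocked CIDR ranges using Python's standard `ipaddress` module. The ranges (taken from the reserved documentation blocks) and the `is_blocked` helper are assumptions made for this example, not the module's real configuration or API.

```python
import ipaddress

# Hypothetical blocklist using reserved documentation ranges.
BLOCKED_RANGES = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("2001:db8::/32"),
]


def is_blocked(client_ip, blocked_ranges=BLOCKED_RANGES):
    """Return True if client_ip falls inside any blocked network."""
    address = ipaddress.ip_address(client_ip)
    # An IPv4 address is never contained in an IPv6 network, and vice versa.
    return any(address in network for network in blocked_ranges)


print(is_blocked("203.0.113.7"))
print(is_blocked("198.51.100.1"))
```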

Added blocking in Golang (in beta; currently a manual install).

Added signature-based blocking in Envoy.

API Discovery and Risk Management

API Risk Score

The API Risk Score is a single numeric score which combines the likelihood that an API endpoint might get attacked with the possible exposure if such an attack is successful. This feature has now been moved out from behind the feature flag for all to use.
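The exact formula behind the API Risk Score is not described here. Purely as an illustration of how a likelihood and an exposure can be folded into one number, the sketch below assumes both inputs are normalized to the range 0 to 1 and multiplies them onto a 0 to 10 scale. The `risk_score` function, the scale, and the multiplicative combination are all assumptions, not the product's scoring model.

```python
def risk_score(likelihood, exposure):
    """Combine attack likelihood and exposure (each 0.0 to 1.0) into a 0 to 10 score.

    Hypothetical formula for illustration only.
    """
    if not (0.0 <= likelihood <= 1.0 and 0.0 <= exposure <= 1.0):
        raise ValueError("likelihood and exposure must be between 0.0 and 1.0")
    return round(10 * likelihood * exposure, 1)


# An endpoint that is fairly likely to be attacked and exposes a lot if breached.
print(risk_score(0.5, 0.8))
```

A multiplicative form has the property that an endpoint scores high only when it is both likely to be attacked and damaging if breached; other combinations, such as weighted sums, would behave differently.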

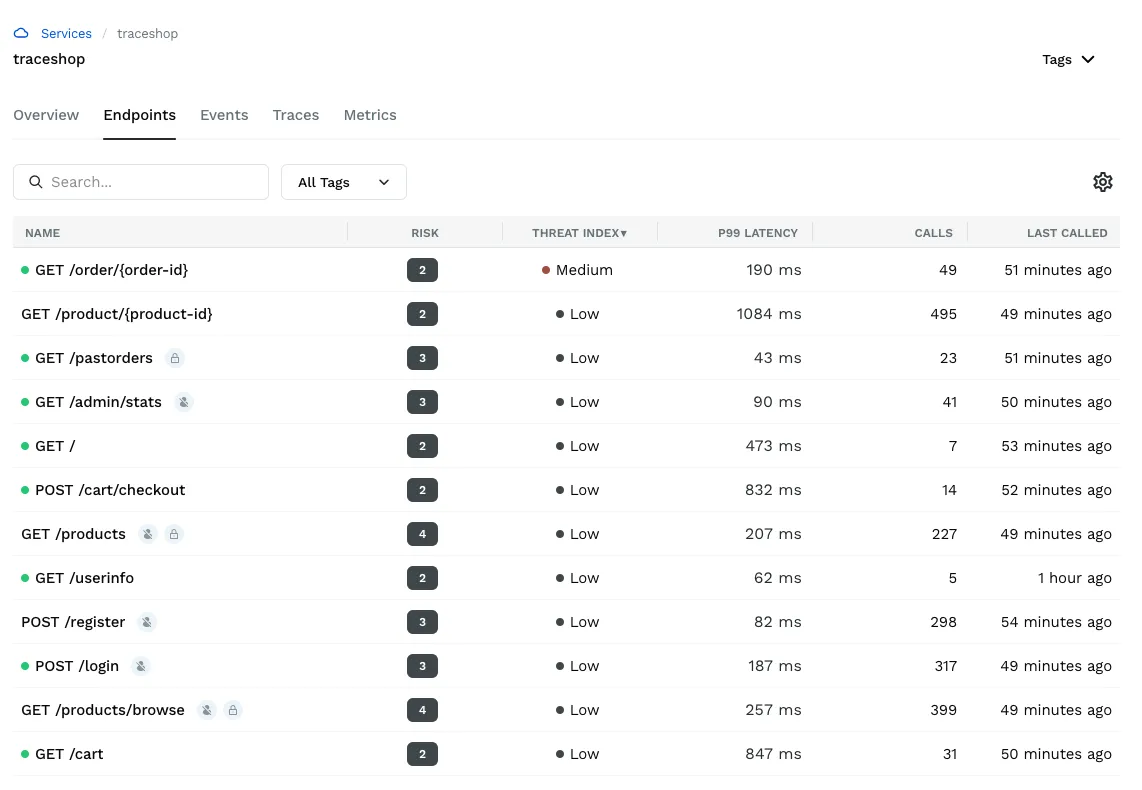

API Threat Index

The new API Threat Index is a category indicator (low, medium, high) for each API endpoint. It clearly identifies whether there are unmitigated high-severity attack attempts against the API endpoint in the reported time period, so security analysts can more efficiently find the API endpoints that need their attention.

Detection of orphan APIs

Orphaned endpoints are API endpoints that have not received any traffic for a long time. Traceable Defense AI tracks and displays the last called time for every API endpoint, making it easier to find and review orphans. Orphaned API detection helps eliminate the risks posed by obsolete APIs that are no longer used or maintained but can still provide access that someone shouldn’t have.
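The idea of flagging orphans from a last called time can be sketched in a few lines. The 90-day threshold, the endpoint names, and the `find_orphans` helper below are hypothetical; the post does not state how long "a long time" is.

```python
from datetime import datetime, timedelta, timezone


def find_orphans(last_called, now, max_idle=timedelta(days=90)):
    """Return endpoints whose last call is older than max_idle, sorted by name.

    The 90-day default is an assumed threshold for illustration.
    """
    return sorted(
        endpoint
        for endpoint, timestamp in last_called.items()
        if now - timestamp > max_idle
    )


now = datetime(2021, 3, 1, tzinfo=timezone.utc)
last_called = {
    "GET /v1/orders": datetime(2021, 2, 27, tzinfo=timezone.utc),
    "POST /v1/legacy/export": datetime(2020, 6, 1, tzinfo=timezone.utc),
    "GET /v0/status": datetime(2020, 11, 15, tzinfo=timezone.utc),
}
print(find_orphans(last_called, now))
```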

API Risk Unified View

In the API risk unified view, a DevSecOps user or a security manager can quickly see all the discovered APIs and prioritize those that are high risk. Further, API endpoints that are actively being attacked can be singled out without analyzing hundreds of individual anomalies. This increases the efficiency of teams responsible for the security of their applications and APIs.

Protection

The latest M8 release continues to focus on enhancing the different ways Traceable can better protect your web applications and APIs.

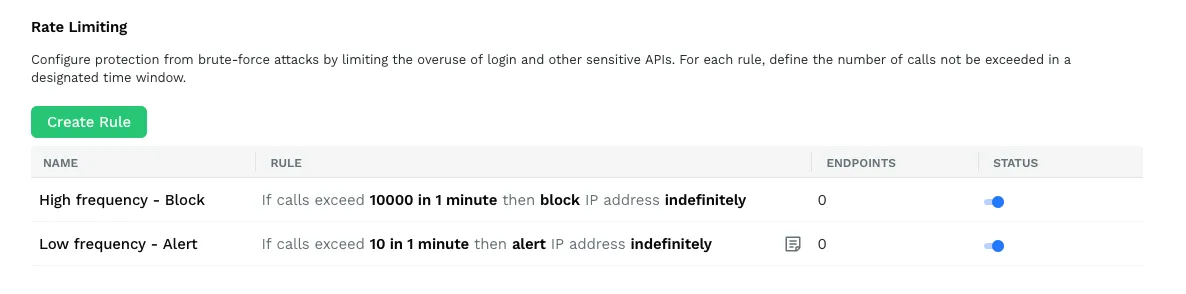

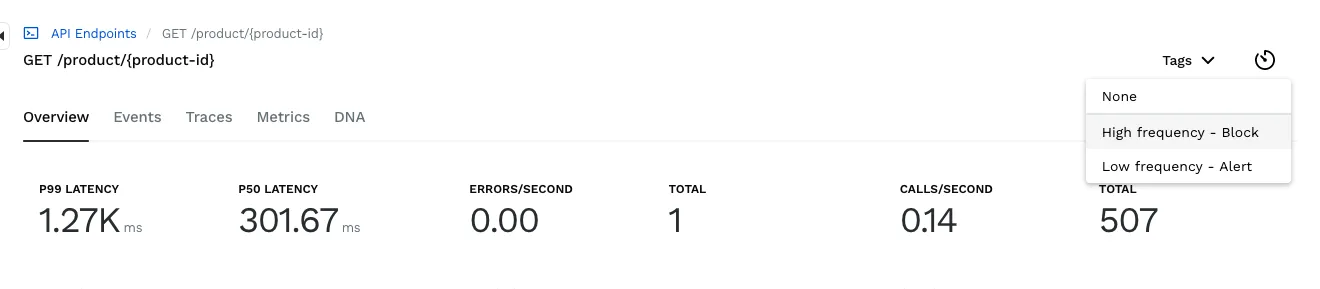

Rate limiting rules

Rate limiting helps prevent account takeover (ATO) and brute force attacks on login and similar APIs. It is one of the primary reasons WAFs are used today. Rate limiting helps Traceable Defense AI protect against attacks classified under “API4:2019 – Lack of resources and rate limiting” and “API10:2019 – Insufficient logging and monitoring”, such as “Unusual Logins”, “Unusual Signups”, and “Unusual Password Resets”.

The current implementation lets customers specify the APIs for which they would like to set a rate limit to prevent overuse. Based on these definitions, Traceable Defense AI blocks traffic once the acceptable rate is exceeded for that API.
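For readers who want to see the mechanics, a per-API rate limit can be sketched with a sliding window. This is one common technique, not necessarily the one Traceable Defense AI uses; the `SlidingWindowRateLimiter` class, its parameters, and the key format are assumptions for illustration.

```python
from collections import defaultdict, deque


class SlidingWindowRateLimiter:
    """Allow at most `limit` requests per key within `window_seconds`."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window = window_seconds
        self._hits = defaultdict(deque)  # key -> timestamps of allowed requests

    def allow(self, key, now):
        hits = self._hits[key]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # over the limit: this request would be blocked
        hits.append(now)
        return True


# Hypothetical rule: at most 3 login attempts per client per 60 seconds.
limiter = SlidingWindowRateLimiter(limit=3, window_seconds=60)
key = ("POST /login", "203.0.113.7")
for second in (0, 1, 2, 3, 61):
    print(second, limiter.allow(key, now=second))
```

Passing `now` explicitly keeps the sketch deterministic and easy to test; a live service would typically supply a monotonic clock reading instead.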

Server Side Request Forgery (SSRF) detection (beta)

Server Side Request Forgery is a popular attack that can result in a service compromise. In an SSRF attack, the attacker abuses functionality on the server side to read and/or update internal resources that they should not have access to, potentially on the server itself. In some cases, this attack can lead to arbitrary command execution. Traceable Defense AI now blocks this attack.
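To illustrate the kind of request an SSRF attack relies on, the sketch below flags user-supplied URLs that point at loopback, link-local, or private addresses. It is a simplified heuristic and not how Traceable Defense AI detects SSRF; the `looks_like_ssrf` function is hypothetical, and a production check would also need to resolve hostnames and re-check the resulting addresses.

```python
import ipaddress
from urllib.parse import urlparse


def looks_like_ssrf(url):
    """Return True if the URL targets an internal address (simplified heuristic)."""
    host = urlparse(url).hostname
    if host is None:
        return True  # no host to validate, so refuse the target
    if host.lower() == "localhost":
        return True
    try:
        address = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name: this sketch does not resolve it, a real check would.
        return False
    return address.is_private or address.is_loopback or address.is_link_local


for target in (
    "http://169.254.169.254/latest/meta-data/",
    "http://127.0.0.1:8080/admin",
    "https://example.com/image.png",
):
    print(target, looks_like_ssrf(target))
```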

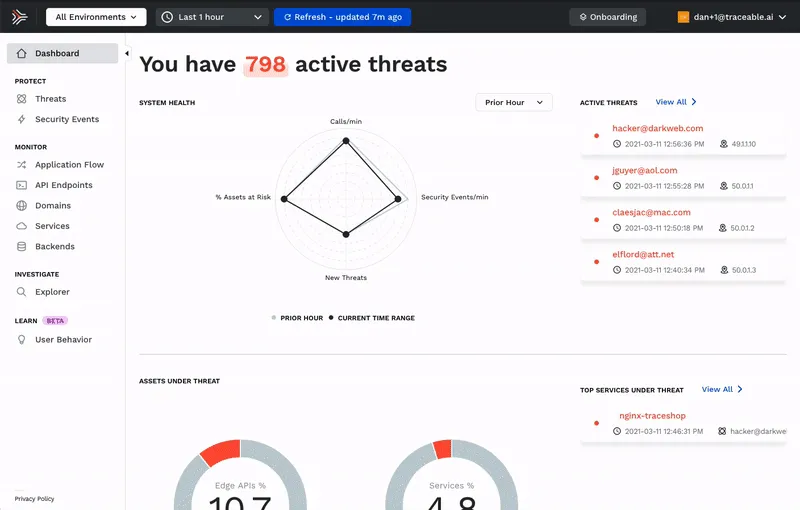

Security events now link directly to user activity in the explorer

Traceable Defense AI collects all the trace data surrounding security events and the user activities around them. Now, when looking at a security event, you can link directly to the explorer screen to investigate all the activities of all the users involved in that event.

This ability not only makes it faster and easier for security analysts to hunt for threats and identify root causes, but also showcases the power of the full session context awareness.

Enterprise Readiness

Environment separation

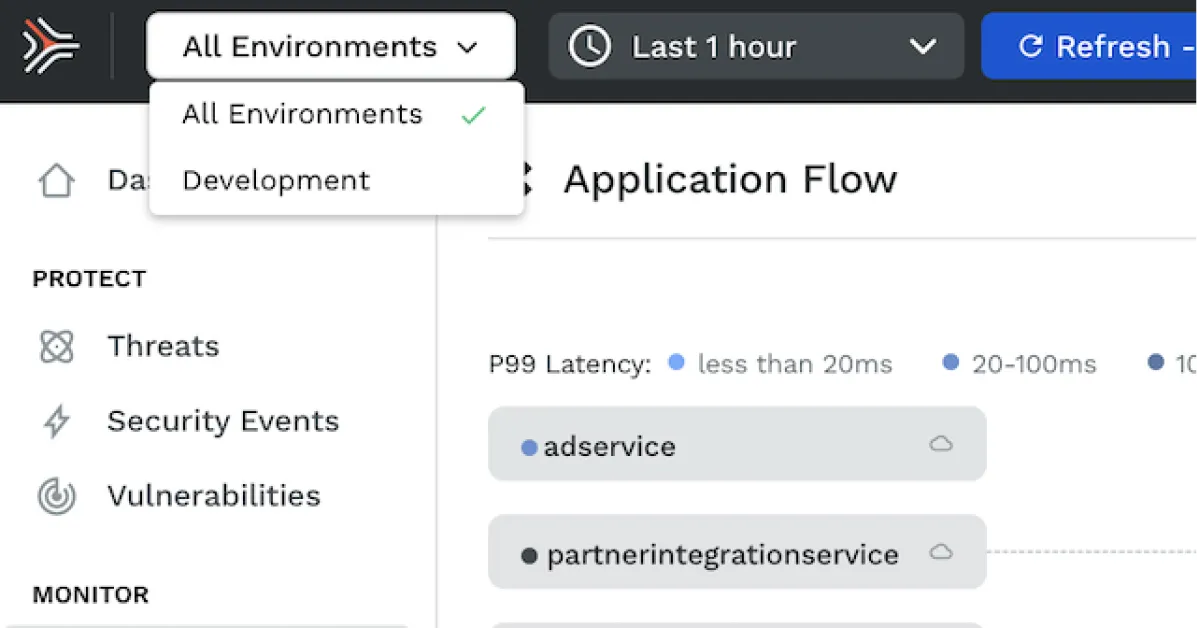

Traceable Defense AI now lets customers separate data and visibility for different environments. This can be used to separate the environments that represent multiple stages in the SDLC (e.g., dev, staging, prod). The feature is versatile and can also be used to separate data and services that belong to multiple tenants or business units (BUs).
